How we created our very own 3D AR Bookmark

Written by: Vaibhav

Augmented Reality (AR) has surged in popularity over recent years, given its ability to offer immersive and interactive experiences in various domains.

Tusitala has also been exploring AR and other extended reality (XR) technologies in our projects, so we thought it would be interesting to produce a unique AR-based souvenir that could introduce people to our work. We decided to develop a 3D AR bookmark that links to a selection of our favourite past projects and social media accounts, rather than just redirecting the users to a single website.

To build it, we used A-Frame, an open-source web framework originally developed at Mozilla for building virtual reality (VR) and AR experiences.

Getting Image Targeting Right

A common challenge with AR is that AR apps sometimes struggle to understand and adapt to the physical environment. This can lead to virtual objects not properly aligning with the real world, causing unnatural and unrealistic interactions.

For example, AR apps may experience drift in tracking, which means the virtual content overlaid on real-world environments may gradually shift away from its intended position. This is especially noticeable in situations where the user moves around or the environment changes.

To get around this, we made use of visual markers within the bookmark. These markers can be recognised and tracked easily by the AR system, which lets it align the virtual content accurately with the marker. For our bookmark, we used a simple set of oval layers with a solid background as the markers.

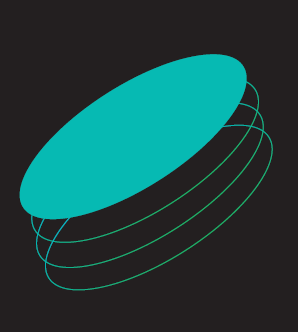

However, we later realised that such simple designs are not recommended for markers. Ideally, a marker or targeted image should be complex and contain many details because that would provide more feature points, which in turn enables stable image tracking.

What an unstable marker image looks like (image credit: mindar.org)
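To make the idea of feature points a little more concrete, here is a rough sketch. It counts pixels whose brightness changes sharply both horizontally and vertically, which is a crude stand-in for the corner-like spots that image trackers look for. The function name, threshold and test images are made up for illustration, and this is not the detector used by any particular AR library.

```typescript
// Crude proxy for "feature points": count pixels whose brightness changes
// strongly in BOTH the horizontal and vertical direction (corner-like spots).
// Illustration only; real trackers use far more robust detectors.
type Gray = number[][]; // rows of brightness values, 0..255

function countCornerLikePixels(img: Gray, threshold = 40): number {
  let count = 0;
  for (let y = 1; y < img.length - 1; y++) {
    for (let x = 1; x < img[y].length - 1; x++) {
      const dx = Math.abs(img[y][x + 1] - img[y][x - 1]);
      const dy = Math.abs(img[y + 1][x] - img[y - 1][x]);
      if (dx > threshold && dy > threshold) count++;
    }
  }
  return count;
}

// A flat, solid-colour patch (like a plain background).
const flat: Gray = Array.from({ length: 8 }, () => new Array<number>(8).fill(200));

// A detailed patch: a checkerboard made of 2x2-pixel cells.
const checker: Gray = Array.from({ length: 8 }, (_, y) =>
  Array.from({ length: 8 }, (_, x) =>
    (Math.floor(x / 2) + Math.floor(y / 2)) % 2 === 0 ? 255 : 0
  )
);

const flatCount = countCornerLikePixels(flat);
const checkerCount = countCornerLikePixels(checker);
console.log({ flatCount, checkerCount });
```

The flat patch gives the tracker nothing to lock onto, while the detailed patch produces many candidate points. That is the intuition behind preferring busy, high-contrast marker images.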

Resolving the unstable marker image

A typical marker-based AR experience relies on the target image (i.e. the marker) to maintain its position: when the target image moves, the AR content moves with it.

Because the AR system could not continuously track and recognise our marker due to its simplicity, we had to stop the image tracking once the AR was triggered and keep the content at the position where it was triggered. This meant that if the bookmark was removed from the camera feed, the AR content would stay where it was even when the user looked around, which is not ideal.

That’s when we turned to SLAM, which tracks the real world while maintaining an AR object’s position within it.

What is SLAM?

SLAM stands for Simultaneous Localization and Mapping. It is a class of algorithms used in computer vision, robotics and augmented reality to estimate the position and orientation of a device (such as a camera or sensor) within an environment while building a map of that environment in real time.

Through specific algorithms such as PTAM (Parallel Tracking and Mapping), SLAM enables AR devices to accurately determine their position and orientation within the physical environment using data from the device’s own sensors (e.g. cameras, accelerometers, gyroscopes, and sometimes depth sensors), without relying on external markers. This is essential for tracking the real world and aligning virtual objects or information with it. Without precise positioning, AR experiences would be disjointed and thus less immersive.
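As a small illustration of why the device pose matters, the sketch below shows what a renderer can do once SLAM has supplied a pose: it re-expresses a world-anchored point in the camera's own frame each time the camera moves. It is a simplified 2D example with hypothetical names (`Pose`, `worldToCamera`), not a SLAM implementation and not the API of any SLAM engine.

```typescript
// 2D camera pose: position in the world and heading in radians.
interface Pose { x: number; y: number; heading: number; }
interface Point { x: number; y: number; }

// Express a world-space point in the camera's local frame
// (local x = straight ahead, local y = to the camera's left).
function worldToCamera(p: Point, cam: Pose): Point {
  const dx = p.x - cam.x;
  const dy = p.y - cam.y;
  // Undo the camera's rotation by rotating through -heading.
  const c = Math.cos(-cam.heading);
  const s = Math.sin(-cam.heading);
  return { x: c * dx - s * dy, y: s * dx + c * dy };
}

// The virtual object is anchored once, in world coordinates.
const anchor: Point = { x: 2, y: 0 };

// As the estimated pose changes, the anchor's place in the view changes too,
// while its world position stays exactly the same.
const atStart = worldToCamera(anchor, { x: 0, y: 0, heading: 0 });
const afterStep = worldToCamera(anchor, { x: 1, y: 0, heading: 0 });
const afterTurn = worldToCamera(anchor, { x: 0, y: 0, heading: Math.PI / 2 });
console.log({ atStart, afterStep, afterTurn });
```

If the pose estimate is wrong, the computed view position is wrong as well, which is what shows up on screen as drift.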

Refining the AR experience

Once we switched to 8th Wall’s SLAM engine for our project, tracking with our visual marker worked well. One problem remained: we wanted the 3D object to appear as though it were emerging from beneath the ground, which required a sense of perspective.

Before refinement: no sense of perspective

To solve this, we used hider elements to create an invisible overlay that serves as the ‘ground’, which can hide and then reveal virtual content. When the AR animation starts, the hider element makes the 3D objects appear to emerge from our bookmark.
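One common way to get this effect in a WebGL renderer is a depth-only surface: it is drawn into the depth buffer but writes no colour, so the camera feed shows through it while anything behind it fails the depth test. The sketch below simulates that for a single pixel. It assumes this is how the hider works, which we have not detailed here, and all names in it are illustrative.

```typescript
// One screen pixel: the colour shown and the depth of the nearest surface so far.
interface Pixel { color: string; depth: number; }

// A piece of a surface that wants to be drawn at that pixel.
interface Fragment { color: string; depth: number; writesColor: boolean; }

// Standard depth test: keep a fragment only if it is nearer than what is there.
function draw(pixel: Pixel, frag: Fragment): Pixel {
  if (frag.depth >= pixel.depth) return pixel; // hidden behind something nearer
  return {
    // A depth-only fragment updates the depth but leaves the colour alone.
    color: frag.writesColor ? frag.color : pixel.color,
    depth: frag.depth,
  };
}

const cameraFeed: Pixel = { color: "camera", depth: Infinity };
const hider: Fragment = { color: "unused", depth: 1.0, writesColor: false };
const modelBehindHider: Fragment = { color: "model", depth: 1.5, writesColor: true };
const modelInFront: Fragment = { color: "model", depth: 0.5, writesColor: true };

// The hider is drawn first, then the model.
const hidden = draw(draw(cameraFeed, hider), modelBehindHider);
const revealed = draw(draw(cameraFeed, hider), modelInFront);
console.log(hidden.color, revealed.color);
```

While the model sits behind the hider, the viewer still sees the camera feed at that pixel. As the animation moves the model in front of the hider, it passes the depth test and becomes visible, which reads as the object rising out of the bookmark.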

Making 3D objects interactive

Finally, we wanted to make our 3D objects clickable, so that when users tap on one of the social media icons, it opens the linked webpage in a new tab. In this way, we could have multiple links leading people to view different projects and pages.

Here, we used raycasting in the WebGL scene. Raycasting projects an invisible ray from the camera through the point the user taps and reports which objects the ray intersects, thus making items ‘clickable’. It is often used for tasks such as object picking, interaction with 3D elements, collision detection in games, and determining what objects are in the user’s view in AR and VR applications.
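As a rough sketch of the idea, the example below picks the nearest object hit by a ray. For simplicity each object is approximated by a sphere, whereas a real raycaster tests against the actual mesh geometry. The object names and positions are made up for illustration.

```typescript
interface Vec3 { x: number; y: number; z: number; }
interface Sphere { name: string; center: Vec3; radius: number; }

const sub = (a: Vec3, b: Vec3): Vec3 => ({ x: a.x - b.x, y: a.y - b.y, z: a.z - b.z });
const dot = (a: Vec3, b: Vec3): number => a.x * b.x + a.y * b.y + a.z * b.z;

// Distance along the ray to the first hit on the sphere, or null on a miss.
// `dir` must be a unit vector. Rays that start inside the sphere are ignored.
function hitDistance(origin: Vec3, dir: Vec3, s: Sphere): number | null {
  const oc = sub(origin, s.center);
  const b = dot(oc, dir);
  const c = dot(oc, oc) - s.radius * s.radius;
  const disc = b * b - c; // discriminant of t^2 + 2bt + c = 0
  if (disc < 0) return null;
  const t = -b - Math.sqrt(disc);
  return t >= 0 ? t : null;
}

// Name of the nearest object the ray hits, or null if it hits nothing.
function pickNearest(origin: Vec3, dir: Vec3, objects: Sphere[]): string | null {
  let best: { name: string; t: number } | null = null;
  for (const obj of objects) {
    const t = hitDistance(origin, dir, obj);
    if (t !== null && (best === null || t < best.t)) best = { name: obj.name, t };
  }
  return best ? best.name : null;
}

// Hypothetical scene: two icons in a line in front of the camera, one off to the side.
const icons: Sphere[] = [
  { name: "icon-far", center: { x: 0, y: 0, z: -5 }, radius: 0.5 },
  { name: "icon-near", center: { x: 0, y: 0, z: -3 }, radius: 0.5 },
  { name: "icon-side", center: { x: 2, y: 0, z: -3 }, radius: 0.5 },
];
const camera: Vec3 = { x: 0, y: 0, z: 0 };
const tappedName = pickNearest(camera, { x: 0, y: 0, z: -1 }, icons);
const missed = pickNearest(camera, { x: 1, y: 0, z: 0 }, icons);
console.log(tappedName, missed);
```

Taking the nearest hit matters because a ray can pass through several objects, and the user expects the tap to land on the one in front.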

We created a custom component that traverses the 3D model to find the part the user has tapped, then calls a function that follows the matching link by opening a new browser tab. Pop-up instructions were also added to ensure that the AR experience is user-friendly.
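The matching step can be sketched roughly as follows. This is a simplified stand-in rather than our actual component: the node structure, part names and URLs are hypothetical, and the function that opens the link is passed in so the logic can run outside a browser.

```typescript
// Minimal stand-in for a node in a loaded 3D model's hierarchy.
interface ModelNode { name: string; children: ModelNode[]; }

// Depth-first traversal: is `target` this node or one of its descendants?
function containsNode(root: ModelNode, target: ModelNode): boolean {
  if (root === target) return true;
  return root.children.some((child) => containsNode(child, target));
}

// Find the link for whichever named part of the model contains the tapped node.
function linkForTap(
  model: ModelNode,
  tapped: ModelNode,
  links: Record<string, string>
): string | null {
  for (const part of model.children) {
    const url = links[part.name];
    if (url !== undefined && containsNode(part, tapped)) return url;
  }
  return null;
}

// Open the link if the tap landed on a linked part. Returns whether it did.
function handleTap(
  model: ModelNode,
  tapped: ModelNode,
  links: Record<string, string>,
  open: (url: string) => void
): boolean {
  const url = linkForTap(model, tapped, links);
  if (url === null) return false;
  open(url);
  return true;
}

// Hypothetical model: two icon groups and an unlinked base, each with a mesh.
const iconMeshA: ModelNode = { name: "mesh-a", children: [] };
const iconMeshB: ModelNode = { name: "mesh-b", children: [] };
const baseMesh: ModelNode = { name: "mesh-base", children: [] };
const bookmarkModel: ModelNode = {
  name: "bookmark",
  children: [
    { name: "icon-a", children: [iconMeshA] },
    { name: "icon-b", children: [iconMeshB] },
    { name: "base", children: [baseMesh] },
  ],
};
const partLinks: Record<string, string> = {
  "icon-a": "https://example.com/a",
  "icon-b": "https://example.com/b",
};

const opened: string[] = [];
const record = (url: string): void => { opened.push(url); };
const hitIcon = handleTap(bookmarkModel, iconMeshB, partLinks, record);
const hitBase = handleTap(bookmarkModel, baseMesh, partLinks, record);
console.log(opened, hitIcon, hitBase);
```

In a browser, the `open` argument could be `(url) => window.open(url, "_blank")`, which asks the browser to open the page in a new tab.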

Final Product

My colleague Christine brought some of our new AR bookmarks on her recent trip to the Frankfurt Book Fair this October, and they proved to be quite popular!

There’s so much more to create and explore in the world of AR, and I’m excited to see what our next AR project will be.

For those interested, I found a good read with more tips: “Things to note before developing your image-targeted AR”.